Diver Detection

Relevant Publications:

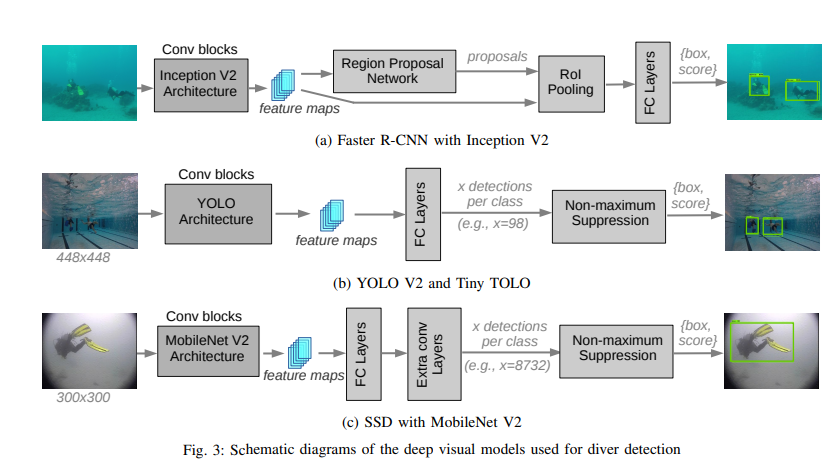

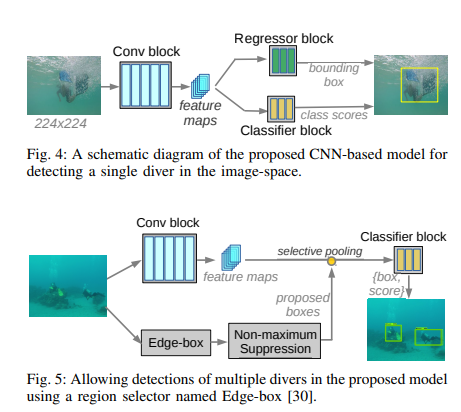

RAL-19, IROS-21, IROS-21 (Prediction)

Diver detection is a challenging task, but it is key to underwater human-robot interaction (HRI): for an AUV and a diver to collaborate effectively, the AUV must know where the diver is. My earliest work on this subject was carried out under the direction of fellow PhD student Md. Jahidul Islam. It produced a novel diver detection algorithm and compared it with other commonly used methods.

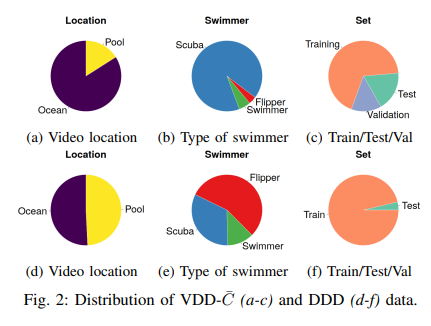

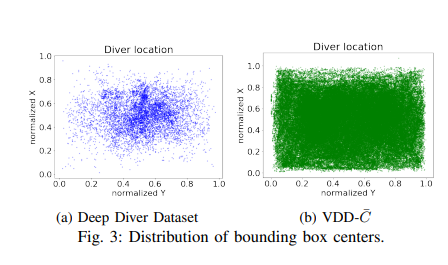

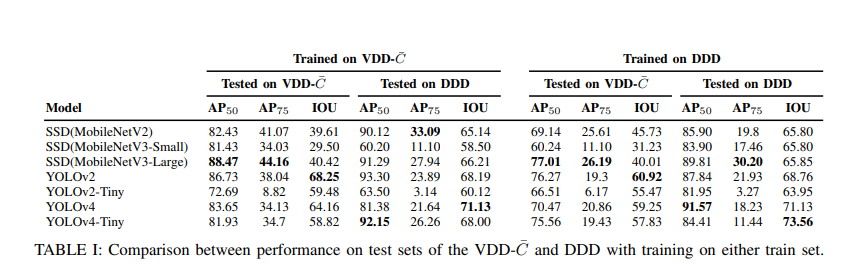

Next, I worked with another PhD student, Karin de Langis, to develop VDD-C, a new diver detection dataset that improves on the earlier DDD (Deep Diver Dataset).

VDD-C consists of over 100,000 images of divers taken from video. It covers a wide range of equipment and diver positions, and it includes scenes in which multiple divers occlude one another.

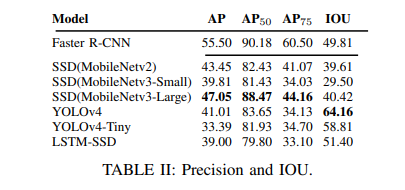

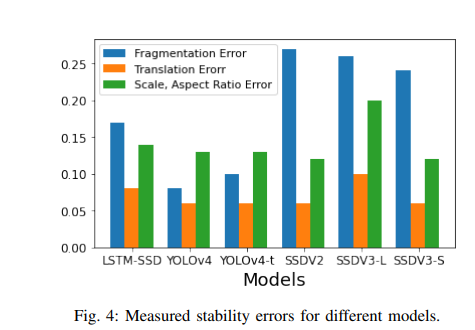

The increased rigor of this dataset improved state-of-the-art diver detection performance simply by providing enough training data. Beyond that, we explored video detection methods for diver detection for the first time. We also profiled common object detectors on their per-image accuracy and on how much they struggled in video contexts.
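The text does not say how "struggling in video contexts" was measured. One hypothetical way to quantify it is to count flicker events. A flicker event is a frame where a detector misses a diver that it found in both the previous and the next frame. The sketch below assumes per-frame ground-truth boxes for a single diver track and an IoU threshold of 0.5. The names `iou`, `hit_sequence`, and `flicker_count` are illustrative and do not come from the VDD-C paper or its code.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def hit_sequence(gt_track: List[Box], detections: List[List[Box]],
                 thresh: float = 0.5) -> List[bool]:
    """For one ground-truth diver track, mark a frame True if any detection
    in that frame overlaps the ground-truth box with IoU >= thresh."""
    return [any(iou(gt, d) >= thresh for d in dets)
            for gt, dets in zip(gt_track, detections)]


def flicker_count(hits: List[bool]) -> int:
    """Count isolated misses: frames that were missed even though the
    frames immediately before and after were both hits."""
    return sum(1 for t in range(1, len(hits) - 1)
               if hits[t - 1] and not hits[t] and hits[t + 1])


# Usage: a diver visible for 5 frames, with the detector dropping frame 2.
gt = [(10, 10, 50, 90)] * 5
dets = [[(12, 12, 50, 88)], [(11, 10, 49, 90)], [],
        [(10, 10, 52, 91)], [(10, 12, 50, 90)]]
hits = hit_sequence(gt, dets)
```

Under this metric, a detector with high per-image accuracy can still score poorly if its misses are scattered one frame at a time. Such scattered misses would disrupt a robot that tracks the diver.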

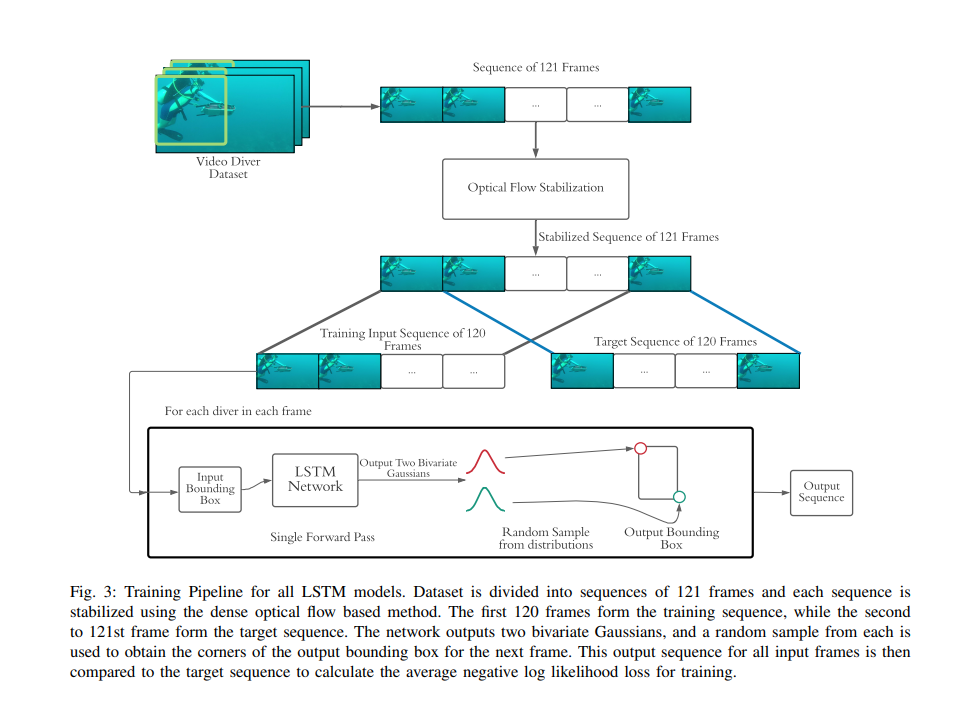

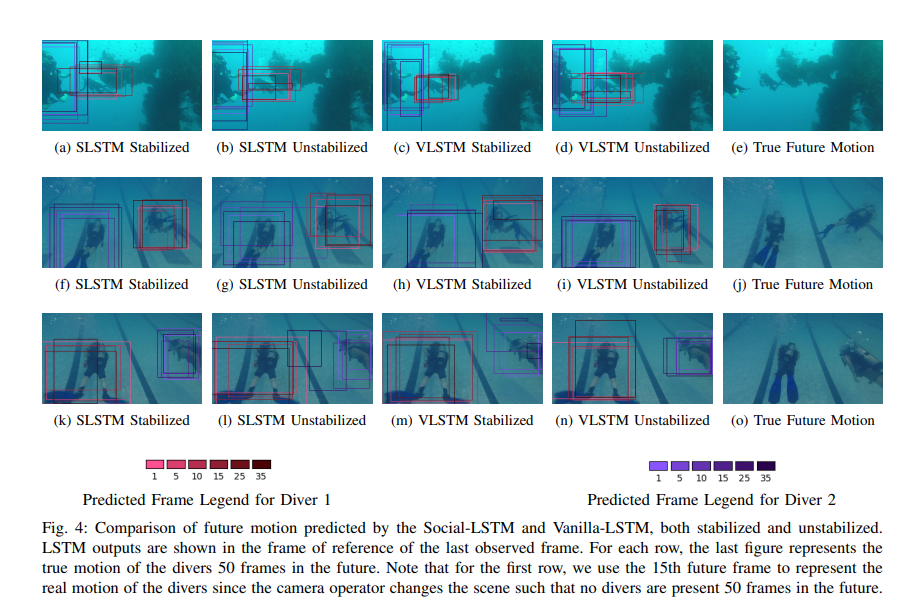

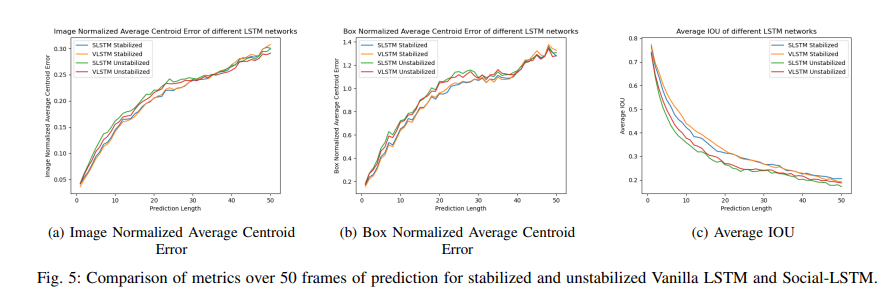

I also worked for a time on extending diver detectors to predict the future motion of divers. Under my direction, undergraduate student Tanmay Agarwal adapted several LSTMs designed for pedestrian motion prediction to diver motion. The adapted models produced reasonably accurate predictions up to 2 seconds into the future.
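The LSTM models themselves are not described here. As a point of reference, pedestrian trajectory-prediction work commonly reports average displacement error (ADE) and final displacement error (FDE). It also commonly compares learned models against a constant-velocity baseline. The sketch below implements that baseline and those two metrics, not the LSTM. The 5 Hz frame rate used to turn "2 seconds" into a step count is an assumption, and the function names are illustrative.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) position, e.g. in image pixels


def constant_velocity_forecast(history: List[Point], horizon: int) -> List[Point]:
    """Baseline predictor: repeat the last observed per-step displacement
    for `horizon` future steps."""
    (x0, y0), (x1, y1) = history[-2], history[-1]
    vx, vy = x1 - x0, y1 - y0
    return [(x1 + vx * k, y1 + vy * k) for k in range(1, horizon + 1)]


def ade_fde(pred: List[Point], truth: List[Point]) -> Tuple[float, float]:
    """Average displacement error over all predicted steps, and the
    displacement error at the final step."""
    errors = [math.dist(p, t) for p, t in zip(pred, truth)]
    return sum(errors) / len(errors), errors[-1]


# Usage: a 2-second horizon at an assumed 5 Hz frame rate (10 steps).
FPS = 5
history = [(0.0, 0.0), (1.0, 0.5)]
pred = constant_velocity_forecast(history, horizon=2 * FPS)
```

A learned model is only useful if its ADE and FDE beat this baseline. The baseline is hard to beat when divers hover or swim steadily.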

Since that time, my work on diver perception has shifted from detection to body pose estimation (no publications as yet). I have been using DeepLabCut to develop a diver pose estimator which has allowed the development of higher-order algorithms for underwater HRI.
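DeepLabCut reports each tracked body part as an (x, y) position plus a likelihood. Downstream algorithms therefore usually discard low-confidence keypoints before using them. The sketch below shows that filtering step and one simple derived feature, the image-plane angle between two keypoints. The body-part names (`head`, `hip`, `left_fin`), the 0.6 threshold, and the helper functions are hypothetical illustrations. They are not the actual diver pose model or the HRI algorithms mentioned above.

```python
import math
from typing import Dict, Optional, Tuple

# (x, y, likelihood) for one body part in one frame.
Keypoint = Tuple[float, float, float]


def reliable(keypoints: Dict[str, Keypoint],
             min_likelihood: float = 0.6) -> Dict[str, Tuple[float, float]]:
    """Keep only keypoints whose likelihood meets an (arbitrary) threshold."""
    return {name: (x, y) for name, (x, y, p) in keypoints.items()
            if p >= min_likelihood}


def segment_angle_deg(points: Dict[str, Tuple[float, float]],
                      a: str, b: str) -> Optional[float]:
    """Angle in degrees of the segment from keypoint a to keypoint b.
    Image y points down, so 90 means 'straight down the image'.
    Returns None if either keypoint was filtered out."""
    if a not in points or b not in points:
        return None
    (xa, ya), (xb, yb) = points[a], points[b]
    return math.degrees(math.atan2(yb - ya, xb - xa))


# Usage with hypothetical body-part names for a single frame.
frame = {"head": (100.0, 50.0, 0.95),
         "hip": (100.0, 150.0, 0.9),
         "left_fin": (80.0, 200.0, 0.2)}
pts = reliable(frame)
```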